The autonomous systems being developed across various domains and industries have grown markedly more sophisticated in recent years, due in large part to advances in computing, modeling, sensing, and other technologies. While much of the technology enabling this revolution has advanced rapidly, formal safety assurances for these systems still lag behind.

This is largely due to their reliance on data-driven machine learning (ML) technologies, which are inherently unpredictable and lack the mathematical framework needed to provide guarantees of correctness. Without assurances, trust in the safety and correct operation of any learning-enabled cyber-physical system (LE-CPS) is limited, impeding broad deployment and adoption of these systems in critical defense situations and capabilities.

To address this challenge, DARPA’s Assured Autonomy program is working to provide continual assurance of an LE-CPS’s safety and functional correctness, both at the time of its design and while operational. The program is developing mathematically verifiable approaches and tools that can be applied to different types and applications of data-driven ML algorithms in these systems to enhance their autonomy and assure they are achieving an acceptable level of safety. To help ground the research objectives, the program is prioritizing challenge problems in the defense-relevant autonomous vehicle space, specifically related to air, land, and underwater platforms.

The first phase of the Assured Autonomy program recently concluded. To assess the technologies in development, research teams integrated them into a small number of autonomous demonstration systems and evaluated each against various defense-relevant challenges. After 18 months of research and development on the assurance methods, tools, and learning enabled capabilities (LECs), the program is exhibiting early signs of progress.

“There are several examples of success on the program, but three in particular showed great progress on the air, land, and underwater demonstration platforms,” said Dr. Sandeep Neema, the Assured Autonomy program manager. “Researcher collaborations led by evaluation teams at Boeing, Northrop Grumman, and the U.S. Army Combat Capabilities Development Command (CCDC) Ground Vehicle Systems Center, working along with technology development teams, have successfully demonstrated technologies capable of providing assurances at design time, as well as operation time when the system and environment are evolving.”

Teams from the University of California, Berkeley, Collins Aerospace, and SGT Inc., working with Boeing, successfully demonstrated that the Assured Autonomy technologies they are developing can provably enhance the safety of an aircraft during ground operations. To provide greater assurance at design time, the university team developed VerifAI, a toolkit for the design and analysis of ML-based systems driven by formal models and specifications.

VerifAI seeks to address the challenges of applying formal methods to perception and ML components, and of modeling and analyzing system behavior under environmental uncertainty. To provide assurance during operations, the researchers also developed an “eyes-closed” safety kernel method. This method allows an autonomous system to detect an anomalous input, such as an obstacle in the path of an LE-CPS, and then determine an appropriate, safe response behavior.

The researchers integrated their tools with Boeing’s evaluation platforms, including an Iron Bird X-Plane simulation and a small test-bed aircraft, and tested them against challenge problems in ground operations, specifically assuring taxi operations on an airfield. The problems included centerline tracking and the detection and avoidance of obstacles on the runway during taxiing – important capabilities for unmanned aircraft that operate at airfields and on aircraft carrier decks. During the live aircraft exercise, the assurance methods detected an obstacle during taxi, triggering a safety method that identified and executed a response to route around it.

The assurance methods also detected when the camera feed was degraded by noise or obscured, kicking in a safety method that identified and executed what it deemed the safest response: stopping the aircraft until it could safely resume operations. Additionally, the tools detected anomalies that could cause the LEC to misbehave and allowed the system to maintain safe operations despite them. Further, the use of formal models and specifications provided assurances about the system’s safety at both design time and run time.
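The detect-then-respond behavior described above resembles the general runtime-assurance pattern, in which a monitor checks each input and hands control from the learned component to a conservative fallback when something looks wrong. The Python sketch below illustrates only that pattern; it is not the teams’ safety kernel, and the `Observation` fields, the perception-noise score, and the `noise_limit` threshold are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    FOLLOW_LEC = "follow_lec"        # trust the learning-enabled component
    ROUTE_AROUND = "route_around"    # obstacle ahead: take a safe detour
    STOP = "stop"                    # perception untrustworthy: halt


@dataclass
class Observation:
    obstacle_on_path: bool   # hypothetical obstacle-detector output
    image_noise: float       # hypothetical camera-quality score in [0, 1]


def select_action(obs: Observation, noise_limit: float = 0.3) -> Action:
    """Hypothetical safety-kernel decision rule, checked in priority order."""
    # If the camera input looks degraded, nothing downstream can be trusted,
    # so the most conservative response (stopping) takes precedence.
    if obs.image_noise > noise_limit:
        return Action.STOP
    # With trustworthy perception, a detected obstacle triggers a detour.
    if obs.obstacle_on_path:
        return Action.ROUTE_AROUND
    # Otherwise, the learning-enabled component keeps control.
    return Action.FOLLOW_LEC
```

Ordering the checks so that degraded perception wins over obstacle avoidance mirrors the behavior described above: when the camera cannot be trusted, stopping is preferred over acting on what the camera reports.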

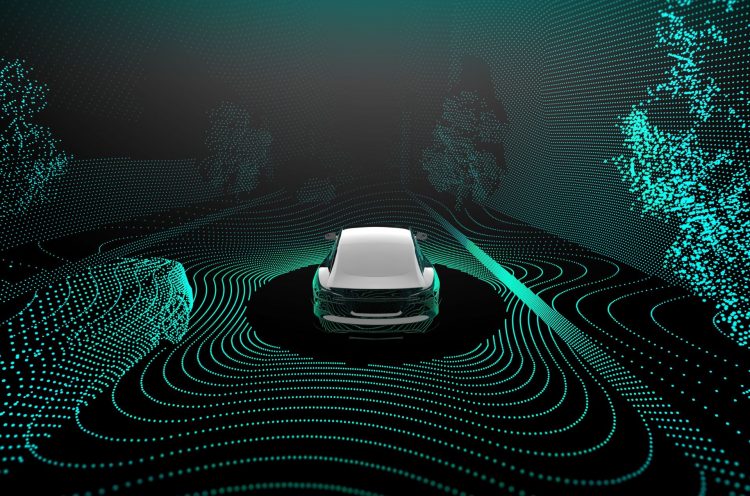

Beyond air operations, a team of researchers from HRL Laboratories, working with the U.S. Army CCDC Ground Vehicle Systems Center, successfully demonstrated their assurance tools on an autonomous military vehicle, the Polaris MRZR. The HRL researchers developed a toolkit that applies mathematical reasoning to AI systems to find and prevent safety failures by computing the circumstances that could cause bad outcomes, essentially determining when neural networks are unsafe to use. They used mathematical models of LECs with computer-checked proofs of safety, and a dynamic assurance monitor that measures a demonstration system’s deviation from the mathematical model.
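In a much simplified form, a dynamic assurance monitor of this kind can be illustrated as a residual check: predict the next state from a reference model, compare the prediction with what the vehicle actually did, and flag deviations beyond a bound. In this sketch the constant-velocity model, the 2D position state, and the `bound` value are hypothetical stand-ins, not HRL’s models.

```python
import math
from typing import List, Tuple

State = Tuple[float, float]  # hypothetical (x, y) position in meters


def predict(state: State, velocity: Tuple[float, float], dt: float) -> State:
    """Hypothetical reference model: constant-velocity kinematics."""
    return (state[0] + velocity[0] * dt, state[1] + velocity[1] * dt)


class DeviationMonitor:
    """Flags when observed behavior drifts too far from the reference model."""

    def __init__(self, bound: float) -> None:
        self.bound = bound              # hypothetical max allowed deviation (m)
        self.history: List[float] = []  # recorded deviations, one per check

    def check(self, predicted: State, observed: State) -> bool:
        """Record the deviation and return True if it is within the bound."""
        deviation = math.dist(predicted, observed)
        self.history.append(deviation)
        return deviation <= self.bound
```

For example, with `DeviationMonitor(bound=0.5)` and a prediction of `(1.0, 0.0)`, an observed position of `(1.2, 0.0)` passes, while `(1.0, 1.0)` is flagged as out of bounds.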

To evaluate their tools’ effectiveness, the HRL Laboratories researchers first used them to identify potential scenarios in which an AI system would operate unexpectedly or exhibit anomalous behavior. The researchers then fed their findings into a simulation to verify and demonstrate that the identified scenarios would indeed lead to unsafe behaviors. Following the simulation evaluation, the researchers worked with the CCDC Ground Vehicle Systems Center to integrate their toolkit and LECs on the Polaris MRZR for a physical system demonstration.

HRL’s tools were challenged to control the MRZR along a planned path and to navigate around obstacles. During the experiment, the researchers’ verified learning-based approach to LIDAR classified points as “ground” or “non-ground” (ground plane segmentation), which in turn enabled the system to identify obstacles to avoid in the vehicle’s path. The team’s approach is verified to satisfy mathematical correctness properties and demonstrated significant performance improvements over a baseline system.
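To make ground plane segmentation concrete, the sketch below uses a classical geometric technique rather than HRL’s verified learning-based approach: it fits a plane z = a*x + b*y + c to the LIDAR points by least squares, refits once on the points near that first plane, and labels points within a tolerance of the result as “ground.” The point format and the `tol` value are assumptions.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]  # assumed (x, y, z) in meters


def _det3(m: List[List[float]]) -> float:
    """Determinant of a 3x3 matrix by cofactor expansion."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_plane(points: List[Point]) -> Tuple[float, float, float]:
    """Least-squares fit of z = a*x + b*y + c via the normal equations."""
    sxx = sum(x * x for x, _, _ in points)
    sxy = sum(x * y for x, y, _ in points)
    syy = sum(y * y for _, y, _ in points)
    sx = sum(x for x, _, _ in points)
    sy = sum(y for _, y, _ in points)
    sxz = sum(x * z for x, _, z in points)
    syz = sum(y * z for _, y, z in points)
    sz = sum(z for _, _, z in points)
    n = float(len(points))
    A = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    d = _det3(A)
    if abs(d) < 1e-12:
        raise ValueError("too few or degenerate (e.g. collinear) points")
    coeffs = []
    for col in range(3):
        # Cramer's rule: replace one column of A with the right-hand side.
        m = [row[:] for row in A]
        for r in range(3):
            m[r][col] = rhs[r]
        coeffs.append(_det3(m) / d)
    return coeffs[0], coeffs[1], coeffs[2]


def segment_ground(points: List[Point], tol: float = 0.15) -> List[bool]:
    """Label each point True ("ground") if it lies within tol of the plane."""
    a, b, c = fit_plane(points)
    # A single fit is pulled toward obstacle points, so refit once using
    # only the points that were close to the first plane.
    inliers = [p for p in points if abs(p[2] - (a * p[0] + b * p[1] + c)) <= tol]
    a, b, c = fit_plane(inliers)
    return [abs(z - (a * x + b * y + c)) <= tol for x, y, z in points]
```

Points labeled non-ground would then be candidate obstacles for path planning. A fielded system would typically use a more robust estimator such as RANSAC, since a single refit can still be misled when obstacles dominate the scan.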

Finally, two teams of researchers from Vanderbilt University and the University of Pennsylvania, collaborating with Northrop Grumman, are working to address how autonomy based on ML techniques could improve mission effectiveness in vehicle control and perception for an autonomous undersea vehicle (AUV). The teams are developing an LEC that allows an AUV to monitor operating conditions in situ, assess them, and plan alternative courses of action in real time to meet mission objectives safely. The researchers at Vanderbilt University developed ALC, an integrated toolchain for the design and assurance of LECs.

ALC supports the development of cyber-physical systems with LECs, covering architectural modeling, data collection, system software deployment, and LEC training, evaluation, and verification. The resulting LEC and assurance technologies were integrated into an AUV demonstration platform and challenged to use ML to support system perception and control. Specifically, the challenge problem focused on assuring an AUV as it inspected seafloor infrastructure, navigating along a set path without prior access to a layout or map of the area.

“While each evaluation environment is distinctive, undersea environments present a unique set of challenges. In these environments, things move much more slowly, missions can take longer due to harsh environmental conditions and the limits of physics, and navigation/sensing/communications issues exacerbate the challenges. Advanced autonomy and assurance could significantly aid operations in the underwater domain,” noted Neema.

Imbued with “intelligent behavior,” the AUV required significantly less time and energy to complete the test mission. Where the mission would previously have required detailed, multi-step instructions, the LEC navigated the environment safely using only basic guidance about the test mission. The LEC also consolidated what would typically require multiple missions into one, reducing the need for human data analysis and enabling optimal sensor resolution.

“While the Phase 1 tests demonstrate significant program progress, important work needs to be done in subsequent phases before these technologies become eligible for real-world deployment,” said Neema. “Work in Phase 2 will focus on maturation and scalability, improving coverage for hazard scenarios, adding robustness to environmental changes, and optimizing mitigating behavior in contingencies.”
